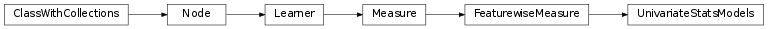

mvpa2.measures.statsmodels_adaptor.UnivariateStatsModels

class mvpa2.measures.statsmodels_adaptor.UnivariateStatsModels(exog, model_gen, res='params', add_constant=True, **kwargs)

Adaptor for some models from the StatsModels package.

This adaptor allows for fitting several statistical models to univariate (in StatsModels terminology “endogenous”) data. A model, based on “exogenous” data (i.e. a design matrix) and optional parameters, is fitted to each feature vector in a given dataset individually. The adaptor supports a variety of models provided by the StatsModels package, including simple ordinary least squares (OLS), generalized least squares (GLS) and others. This feature-wise measure can extract a variety of properties from the model fit results and aggregate them into a result dataset. This includes, for example, all attributes of a StatsModels RegressionResults class, such as model parameters and their error estimates, Akaike’s information criterion, and a number of statistical properties. Moreover, it is possible to perform t-contrasts/t-tests of parameter estimates, as well as F-tests for contrast matrices.

Notes

Available conditional attributes:

- calling_time+: None
- null_prob+: None
- null_t: None
- raw_results: None
- trained_dataset: None
- trained_nsamples+: None
- trained_targets+: None
- training_time+: None

(Conditional attributes enabled by default are suffixed with +)

Examples

Some example data: two features, seven samples

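    >>> import numpy as np
    >>> import statsmodels.api as sm
    >>> from mvpa2.datasets.base import Dataset
    >>> endog = Dataset(np.transpose([[1, 2, 3, 4, 5, 6, 8],
    ...                               [1, 2, 1, 2, 1, 2, 1]]))
    >>> exog = range(7)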

Set up a model generator – it yields an instance of an OLS model for a particular design and feature vector. The generator will be called internally for each feature in the dataset.

    >>> model_gen = lambda y, x: sm.OLS(y, x)

Configure the adaptor with the model generator and a common design for all feature model fits. Tell the adaptor to auto-add a constant to the design.

    >>> from mvpa2.measures.statsmodels_adaptor import UnivariateStatsModels
    >>> usm = UnivariateStatsModels(exog, model_gen, add_constant=True)

Run the measure. By default it extracts the parameter estimates from the models (two per feature/model: regressor + constant).

    >>> res = usm(endog)
    >>> print res
    <Dataset: 2x2@float64, <sa: descr>>
    >>> print res.sa.descr
    ['params' 'params']
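To make the feature-wise fitting concrete, the following is a minimal sketch in plain Python that involves neither PyMVPA nor StatsModels. It fits a straight line (one regressor plus a constant) to each feature of the example data separately, using the closed-form least-squares solution. The helper `fit_line` is hypothetical and only illustrates the idea; it is not how the adaptor is implemented.

```python
# Illustrative sketch only: plain Python, no PyMVPA or StatsModels involved.
# `fit_line` is a hypothetical helper, not part of either library.

def fit_line(x, y):
    """Closed-form OLS for y = slope * x + const; returns (slope, const)."""
    n = len(x)
    x_mean = sum(x) / float(n)
    y_mean = sum(y) / float(n)
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean

# Same numbers as the example data: seven samples, two features.
x = list(range(7))
features = [
    [1, 2, 3, 4, 5, 6, 8],
    [1, 2, 1, 2, 1, 2, 1],
]

# One independent fit per feature, all sharing the same design `x`.
params = [fit_line(x, y) for y in features]
```

Each tuple in `params` holds the two estimates for one feature, which is the information stored in one column of the 2x2 result dataset. The order of the rows in the actual result depends on where the constant is placed in the design matrix.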

Alternatively, extract t-values for a test of all parameter estimates against zero.

    >>> usm = UnivariateStatsModels(exog, model_gen, res='tvalues',
    ...                             add_constant=True)
    >>> res = usm(endog)
    >>> print res
    <Dataset: 2x2@float64, <sa: descr>>
    >>> print res.sa.descr
    ['tvalues' 'tvalues']

Compute a t-contrast: first parameter is non-zero. This returns additional test statistics, such as p-value and effect size, in the result dataset. The contrast vector is passed on to the t_test() function (r_matrix argument) of the StatsModels result class.

    >>> usm = UnivariateStatsModels(exog, model_gen, res=[1,0],
    ...                             add_constant=True)
    >>> res = usm(endog)
    >>> print res
    <Dataset: 6x2@float64, <sa: descr>>
    >>> print res.sa.descr
    ['tvalue' 'pvalue' 'effect' 'sd' 'df' 'zvalue']
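As a rough guide to what a t-contrast on the regressor measures, here is a textbook calculation for the first feature of the example data, written in plain Python. It assumes the contrast selects the slope of a straight-line fit with a constant, and it does not reproduce the StatsModels implementation. The variable names merely echo some of the `descr` labels; the p-value and z-value are left out.

```python
import math

# Illustrative sketch only: plain Python, no PyMVPA or StatsModels involved.
x = list(range(7))
y = [1, 2, 3, 4, 5, 6, 8]  # first feature of the example data
n = len(x)

x_mean = sum(x) / float(n)
y_mean = sum(y) / float(n)
sxx = sum((xi - x_mean) ** 2 for xi in x)
sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))

effect = sxy / sxx                 # slope estimate selected by the contrast
const = y_mean - effect * x_mean

# Residual variance with n - 2 degrees of freedom (two fitted parameters).
df = n - 2
rss = sum((yi - (const + effect * xi)) ** 2 for xi, yi in zip(x, y))
sigma2 = rss / df

sd = math.sqrt(sigma2 / sxx)       # standard error of the slope
tvalue = effect / sd
```

The t-value is simply the estimated effect divided by its standard error, so a large value indicates a slope that is well separated from zero relative to the residual noise.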

F-test for a contrast matrix, again with additional test statistics in the result dataset. The contrast matrix is passed on to the f_test() function (r_matrix argument) of the StatsModels result class.

    >>> usm = UnivariateStatsModels(exog, model_gen, res=[[1,0],[0,1]],
    ...                             add_constant=True)
    >>> res = usm(endog)
    >>> print res
    <Dataset: 4x2@float64, <sa: descr>>
    >>> print res.sa.descr
    ['fvalue' 'pvalue' 'df_num' 'df_denom']
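With the identity matrix as contrast, the F-test asks whether both parameters are jointly zero. The sketch below works through the textbook F statistic for the first feature in plain Python, assuming a straight-line model with a constant. It is meant as an illustration of the quantity being tested, not as a reproduction of the StatsModels code, and it omits the p-value.

```python
# Illustrative sketch only: plain Python, no PyMVPA or StatsModels involved.
x = list(range(7))
y = [1, 2, 3, 4, 5, 6, 8]  # first feature of the example data
n = len(x)

x_mean = sum(x) / float(n)
y_mean = sum(y) / float(n)
sxx = sum((xi - x_mean) ** 2 for xi in x)
sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
slope = sxy / sxx
const = y_mean - slope * x_mean

fitted = [const + slope * xi for xi in x]
rss = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))

df_num = 2          # number of rows in the contrast matrix
df_denom = n - 2    # residual degrees of freedom
sigma2 = rss / df_denom

# For an identity contrast, the explained sum of squares is the sum of
# squared fitted values (tested against zero, not against the mean).
fvalue = (sum(fi ** 2 for fi in fitted) / df_num) / sigma2
```

Because the constant is included in the hypothesis, this statistic differs from the overall regression F statistic that tests the slope alone.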

For any custom result extraction, a callable can be passed to the res argument. This object will be called with the result of each model fit. Its return value(s) will be aggregated into a result dataset.

    >>> def extractor(res):
    ...     return [res.aic, res.bic]
    >>>
    >>> usm = UnivariateStatsModels(exog, model_gen, res=extractor,
    ...                             add_constant=True)
    >>> res = usm(endog)
    >>> print res
    <Dataset: 2x2@float64>
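An extractor can be tried out in isolation before handing it to the adaptor. The sketch below defines one that reads the `rsquared` and `rsquared_adj` attributes, which StatsModels regression results provide for OLS fits, and exercises it against a hypothetical stand-in object. `FakeResult` and its placeholder numbers are invented for this dry run and are not part of any library.

```python
def extractor(res):
    """Return R-squared and adjusted R-squared of one model fit."""
    return [res.rsquared, res.rsquared_adj]


class FakeResult(object):
    """Hypothetical stand-in for a fit result; the values are placeholders."""
    rsquared = 0.98
    rsquared_adj = 0.97


# Dry run: the extractor only needs an object with the attributes it reads.
values = extractor(FakeResult())
```

Returning a list of fixed length for every fit keeps the aggregated result rectangular, with one row per returned value.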


Parameters:

exog : array-like

Column ordered (observations in rows) design matrix.

model_gen : callable

Callable that returns a StatsModels model when called like model_gen(endog, exog).

res : {‘params’, ‘tvalues’, ...} or 1d array or 2d array or callable

Variable of interest that should be reported as feature-wise measure. If a str, the corresponding attribute of the model fit result class is returned (e.g. ‘tvalues’). If a 1d-array, it is passed to the fit result class’ t_test() function as a t-contrast vector. If a 2d-array, it is passed to the f_test() function as a contrast matrix. In both latter cases a number of common test statistics are returned in the rows of the result dataset. A description is available in the ‘descr’ sample attribute. Any other datatype passed to this argument will be treated as a callable: the model fit result is passed to it, and its return value(s) are aggregated in the result dataset.

add_constant : bool, optional

If True, a constant will be added to the design matrix that is passed to exog.

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

null_dist : instance of distribution estimator

The estimated distribution is used to assign a probability for a certain value of the computed measure.

auto_train : bool

Flag whether the learner will automatically train itself on the input dataset when called untrained.

force_train : bool

Flag whether the learner will enforce training on the input dataset upon every call.

space : str, optional

Name of the ‘processing space’. The actual meaning of this argument heavily depends on the sub-class implementation. In general, this is a trigger that tells the node to compute and store information about the input data that is “interesting” in the context of the corresponding processing in the output dataset.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a; see Dataset.get_attr()), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute stats. In addition to a plain attribute identifier it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for an attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in __call__() to perform a final processing step on the prospective result dataset. If None, nothing is done.

descr : str

Description of the instance
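The forms that the pass_attr parameter accepts, as described in the parameter list above, can be summarized as a short listing. The specifications below are derived solely from that description, from the plain identifier to the full four-element tuple; the variable name `pass_attr_specs` is only for illustration, and whether a given attribute is actually available depends on the node and dataset in use.

```python
# Possible values for `pass_attr`, from simplest to most explicit.
pass_attr_specs = [
    'ca.null_prob',                        # plain attribute identifier
    ('ca.null_prob', 'fa'),                # plus target attribute collection
    ('ca.null_prob', 'fa', 1),             # plus axis to swap with the first axis
    ('ca.null_prob', 'fa', 1, 'pvalues'),  # plus new name in the output dataset
]
```

Each shorter form drops elements from the end of the full tuple, which is what leaving out consecutive tuple elements amounts to.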

Methods

is_trained = True