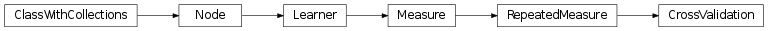

mvpa2.measures.base.CrossValidation

class mvpa2.measures.base.CrossValidation(learner, generator, errorfx=<function mean_mismatch_error>, splitter=None, **kwargs)

Cross-validate a learner’s transfer on datasets.

A generator is used to resample a dataset into multiple instances (e.g. sets of dataset partitions for leave-one-out folding). For each dataset instance, a transfer measure is computed by splitting the dataset into two parts (defined by the dataset generator’s output space), training a custom learner on the first part, and running it on the second. An arbitrary error function can be used to determine the learner’s error when predicting the part of the dataset that was unseen during training.
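The procedure described above can be illustrated with a minimal, library-free sketch. It is not PyMVPA's implementation: `leave_one_chunk_out`, `NearestMeanLearner`, and `cross_validate` are hypothetical stand-ins for a partitioning generator, a learner, and the measure itself, and plain lists stand in for datasets. Partition label 1 marks training samples and label 2 marks testing samples, and the error function takes predicted values first and target values second.

```python
def mean_mismatch_error(predicted, targets):
    """Fraction of predictions that differ from their targets."""
    mismatches = sum(p != t for p, t in zip(predicted, targets))
    return mismatches / len(targets)


def leave_one_chunk_out(chunks):
    """Hypothetical generator: one labelling per chunk (2 = held out, 1 = rest)."""
    for held_out in sorted(set(chunks)):
        yield [2 if c == held_out else 1 for c in chunks]


class NearestMeanLearner:
    """Toy 1-D classifier: predicts the class whose training mean is closest."""

    def train(self, samples, targets):
        sums = {}
        for x, t in zip(samples, targets):
            total, count = sums.get(t, (0.0, 0))
            sums[t] = (total + x, count + 1)
        self.means = {t: total / count for t, (total, count) in sums.items()}

    def predict(self, samples):
        return [min(self.means, key=lambda t: abs(x - self.means[t]))
                for x in samples]


def cross_validate(learner, samples, targets, chunks,
                   errorfx=mean_mismatch_error):
    """Train on the 1-labeled part, test on the 2-labeled part, once per fold."""
    errors = []
    for partitions in leave_one_chunk_out(chunks):
        train_idx = [i for i, p in enumerate(partitions) if p == 1]
        test_idx = [i for i, p in enumerate(partitions) if p == 2]
        learner.train([samples[i] for i in train_idx],
                      [targets[i] for i in train_idx])
        predicted = learner.predict([samples[i] for i in test_idx])
        errors.append(errorfx(predicted, [targets[i] for i in test_idx]))
    return errors


samples = [0.1, 0.9, 0.2, 1.1, 0.0, 0.4]
targets = ["a", "b", "a", "b", "a", "b"]
chunks = [0, 0, 1, 1, 2, 2]
fold_errors = cross_validate(NearestMeanLearner(), samples, targets, chunks)
```

The number of labellings yielded by the generator (three here, one per chunk) determines the number of folds, so `fold_errors` holds one error value per fold.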

Notes

Available conditional attributes:

calling_time+: None

datasets: Store generated datasets for all repetitions. Can be memory expensive.

null_prob+: None

null_t: None

raw_results: None

repetition_results: Store individual result datasets for each repetition.

stats: Summary statistics about the node performance across all repetitions.

trained_dataset: None

trained_nsamples+: None

trained_targets+: None

training_stats: Summary statistics about the training status of the learner across all cross-validation folds.

training_time+: None

(Conditional attributes enabled by default suffixed with +)

Parameters:

learner : Learner

Any trainable node that shall be run on the dataset folds.

generator : Node

Generator used to resample the input dataset into multiple instances (i.e. partitioning it). The number of datasets yielded by this generator determines the number of cross-validation folds. IMPORTANT: The space of this generator determines the attribute that will be used to split all generated datasets into training and testing sets.

errorfx : Node or callable

Custom implementation of an error function. The callable needs to accept two arguments (1. predicted values, 2. target values). If not a Node, it gets wrapped into a BinaryFxNode.

splitter : Splitter or None

A Splitter instance to split the dataset into training and testing parts. The first split will be used for training and the second for testing – all other splits will be ignored. If None, a default splitter is auto-generated using the space setting of the generator. The default splitter is configured to return the 1-labeled partition of the input dataset first, and the 2-labeled partition second. This behavior corresponds to most Partitioners, which label the taken-out portion 2 and the remainder 1.

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

node : Node

Node or Measure implementing the procedure that is supposed to be run multiple times.

callback : functor

Optional callback to extract information from inside the main loop of the measure. The callback is called with the input ‘data’, the ‘node’ instance that is evaluated repeatedly and the ‘result’ of a single evaluation – passed as named arguments (see labels in quotes) for every iteration, directly after evaluating the node.

concat_as : {‘samples’, ‘features’}

Along which axis to concatenate result dataset from all iterations. By default, results are ‘vstacked’ as multiple samples in the output dataset. Setting this argument to ‘features’ will change this to ‘hstacking’ along the feature axis.

null_dist : instance of distribution estimator

The estimated distribution is used to assign a probability for a certain value of the computed measure.

auto_train : bool

Flag whether the learner will automatically train itself on the input dataset when called untrained.

force_train : bool

Flag whether the learner will enforce training on the input dataset upon every call.

space : str, optional

Name of the ‘processing space’. The actual meaning of this argument heavily depends on the sub-class implementation. In general, this is a trigger that tells the node to compute and store information about the input data that is “interesting” in the context of the corresponding processing in the output dataset.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a – see Dataset.get_attr()), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute stats. In addition to a plain attribute identifier it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for an attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in __call__() to perform a final processing step on the result dataset before it is returned. If None, nothing is done.

descr : str

Description of the instance
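The interaction of the callback and concat_as parameters can be sketched without the library. The following is a hedged illustration only, not PyMVPA's code: `run_repeatedly`, `fold_mean`, and `record` are hypothetical names, and lists of rows stand in for result datasets. It shows the callback receiving the named arguments `data`, `node`, and `result` directly after each evaluation, and the two concatenation modes.

```python
def run_repeatedly(node, datasets, callback=None, concat_as="samples"):
    """Evaluate `node` on each dataset and concatenate the per-iteration results.

    Each result is a list of rows (a list of lists), standing in for a dataset.
    """
    results = []
    for data in datasets:
        result = node(data)
        if callback is not None:
            # Named arguments, passed directly after evaluating the node.
            callback(data=data, node=node, result=result)
        results.append(result)

    if concat_as == "samples":
        # 'vstack': every iteration contributes additional rows.
        return [row for result in results for row in result]
    if concat_as == "features":
        # 'hstack': rows of all iterations are joined side by side.
        return [[value for row in rows for value in row]
                for rows in zip(*results)]
    raise ValueError("concat_as must be 'samples' or 'features'")


def fold_mean(data):
    """Toy node: returns a 1x1 result holding the mean of its input."""
    return [[sum(data) / len(data)]]


seen = []


def record(data, node, result):
    """Hypothetical callback: keep the single value of each iteration's result."""
    seen.append(result[0][0])


folds = [[1, 3], [2, 6], [10, 20]]
stacked_rows = run_repeatedly(fold_mean, folds, callback=record)
stacked_cols = run_repeatedly(fold_mean, folds, concat_as="features")
```

With the default ‘samples’ setting each iteration adds a row to the output, whereas ‘features’ places the per-iteration values next to each other in a single row.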

Methods

errorfx

learner

splitter

transfermeasure