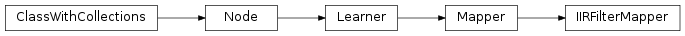

mvpa2.mappers.filters.IIRFilterMapper

class mvpa2.mappers.filters.IIRFilterMapper(b, a, **kwargs)

Mapper using IIR filters for data transformation.

This mapper can perform any IIR-based low-pass, high-pass, or band-pass frequency filtering. It is a front-end for SciPy’s filtfilt(), so its usage is almost identical, and any of SciPy’s IIR filters can be used with this mapper:

>>> from scipy import signal
>>> b, a = signal.butter(8, 0.125)
>>> mapper = IIRFilterMapper(b, a, padlen=150)
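Because the mapper is described as a front-end for filtfilt(), the underlying operation can be sketched with SciPy alone. The example below is only an illustration under that assumption: it calls scipy.signal.filtfilt() directly instead of the mapper, and the synthetic data (samples in rows, features in columns, a 1 kHz sampling rate) is invented for the demonstration.

```python
import numpy as np
from scipy import signal

# Synthetic data (assumed layout): 1000 samples (rows) x 3 features (columns).
# Each feature is a slow 2 Hz sine plus a fast 200 Hz sine, sampled at 1 kHz.
fs = 1000.0
t = np.arange(1000) / fs
slow = np.sin(2 * np.pi * 2 * t)
fast = 0.5 * np.sin(2 * np.pi * 200 * t)
samples = np.tile((slow + fast)[:, None], (1, 3))

# Same low-pass design as in the example above: order 8, cutoff at
# 0.125 of the Nyquist frequency (62.5 Hz for this sampling rate).
b, a = signal.butter(8, 0.125)

# Zero-phase (forward-backward) filtering along the samples axis.
filtered = signal.filtfilt(b, a, samples, axis=0, padlen=150)
```

After filtering, the array keeps its shape and the 200 Hz component is strongly attenuated, while the 2 Hz component passes essentially unchanged.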

Notes

Available conditional attributes:

calling_time+: None
raw_results: None
trained_dataset: None
trained_nsamples+: None
trained_targets+: None
training_time+: None

(Conditional attributes enabled by default are suffixed with +)

Methods

All constructor parameters are analogs of filtfilt() or are passed on to the Mapper base class.

Parameters:

b : (N,) array_like

The numerator coefficient vector of the filter.

a : (N,) array_like

The denominator coefficient vector of the filter. If a[0] is not 1, then both a and b are normalized by a[0].

axis : int, optional

The axis of x to which the filter is applied. By default the filter is applied to all features along the samples axis. Constraints: value must be convertible to type ‘int’. [Default: 0]

padtype : {odd, even, constant} or None, optional

Must be ‘odd’, ‘even’, ‘constant’, or None. This determines the type of extension to use for the padded signal to which the filter is applied. If padtype is None, no padding is used. The default is ‘odd’. Constraints: value must be one of (‘odd’, ‘even’, ‘constant’), or value must be None. [Default: ‘odd’]

padlen : int or None, optional

The number of elements by which to extend x at both ends of axis before applying the filter. This value must be less than x.shape[axis]-1. padlen=0 implies no padding. The default value is 3*max(len(a),len(b)). Constraints: value must be convertible to type ‘int’, or value must be None. [Default: None]

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

auto_train : bool

Flag whether the learner will automatically train itself on the input dataset when called untrained.

force_train : bool

Flag whether the learner will enforce training on the input dataset upon every call.

space : str, optional

Name of the ‘processing space’. The actual meaning of this argument heavily depends on the sub-class implementation. In general, this is a trigger that tells the node to compute and store information about the input data that is “interesting” in the context of the corresponding processing in the output dataset.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a – see Dataset.get_attr()), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute ‘stats’. In addition to a plain attribute identifier it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for an attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in __call__() to perform a final processing step on the dataset that is about to be returned. If None, nothing is done.

descr : str

Description of the instance
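Two rules stated in the parameter list, the normalization of b and a by a[0] and the default and upper bound for padlen, can be made concrete with a small sketch. The helper names normalize_coefficients and resolve_padlen are hypothetical and are not part of PyMVPA or SciPy; they only restate the documented rules in plain Python.

```python
def normalize_coefficients(b, a):
    """Scale b and a so that a[0] == 1 (hypothetical helper)."""
    if a[0] == 0:
        raise ValueError("a[0] must be non-zero")
    scale = float(a[0])
    return [bi / scale for bi in b], [ai / scale for ai in a]


def resolve_padlen(b, a, n_samples, padlen=None):
    """Return the effective padding length (hypothetical helper).

    n_samples stands for x.shape[axis]. When padlen is None the
    documented default 3*max(len(a), len(b)) is used.
    """
    if padlen is None:
        padlen = 3 * max(len(a), len(b))
    # Documented constraint: padlen must be less than x.shape[axis]-1.
    if padlen >= n_samples - 1:
        raise ValueError(
            "padlen=%d is too large for %d samples" % (padlen, n_samples)
        )
    return padlen


# a[0] == 2, so both vectors are divided by 2.
b_norm, a_norm = normalize_coefficients([2.0, 4.0], [2.0, 1.0])

# An order-8 filter has 9 coefficients in each vector,
# so the default padding length is 3 * 9 = 27.
default_padlen = resolve_padlen([1.0] * 9, [1.0] * 9, n_samples=1000)
```

With the default padding, a dataset therefore needs more than 28 samples along the filtered axis for an order-8 filter; shorter datasets require a smaller padlen or padlen=0.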

Methods