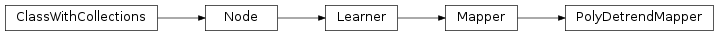

mvpa2.mappers.detrend.PolyDetrendMapper


class mvpa2.mappers.detrend.PolyDetrendMapper(polyord=1, chunks_attr=None, opt_regs=None, **kwargs)

Mapper for regression-based removal of polynomial trends.

Notable features are chunk-wise detrending, optional confound regressors, and the ability to use positional information about the samples in the dataset.

Any sample attribute of the dataset to be mapped can be used to define chunks that are detrended separately. The number of chunks is determined by the number of unique values of the attribute, and all samples with the same value are considered to be in the same chunk.

It is possible to provide a list of additional sample attribute names that are used as confound regressors during detrending. This allows, for example, fMRI motion-correction parameters to be taken into account.
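Chunk-wise detrending with confound regressors, as described above, can be sketched in plain NumPy as a single least-squares fit. This is a minimal illustration under stated assumptions, not PyMVPA's implementation: the helper detrend_chunkwise is hypothetical, each chunk is assumed to get its own block of Legendre regressors (orders 0 to polyord) evaluated on evenly spaced positions in [-1, 1], and the confound columns (e.g. motion parameters) are assumed to be shared across all chunks and fitted jointly with the polynomials.

```python
import numpy as np
from numpy.polynomial import legendre


def detrend_chunkwise(samples, chunks, polyord=1, confounds=None):
    """Hypothetical helper: remove Legendre trends (orders 0..polyord) per chunk.

    samples   : array of shape (nsamples, nfeatures)
    chunks    : chunk label for every sample; equal labels form one chunk
    confounds : optional array of shape (nsamples,) or (nsamples, nregs)
    """
    samples = np.asarray(samples, dtype=float)
    chunks = np.asarray(chunks)
    nsamples = len(chunks)
    columns = []
    for chunk in np.unique(chunks):
        mask = chunks == chunk
        # evenly spaced positions in [-1, 1] for the samples of this chunk,
        # taken in the order in which they appear in the dataset
        x = np.linspace(-1.0, 1.0, int(mask.sum()))
        block = np.zeros((nsamples, polyord + 1))
        block[mask] = legendre.legvander(x, polyord)  # columns P0 .. P_polyord
        columns.append(block)
    if confounds is not None:
        # assumption: confound regressors span all chunks and are fitted jointly
        columns.append(np.asarray(confounds, dtype=float).reshape(nsamples, -1))
    design = np.hstack(columns)
    beta, *_ = np.linalg.lstsq(design, samples, rcond=None)
    # the residuals of the fit are the detrended data
    return samples - design @ beta


# same data as in the Examples section: one linear trend per chunk
samples = np.array([[1.0, 2, 3, 3, 2, 1],
                    [-2.0, -4, -6, -6, -4, -2]]).T
chunks = [0, 0, 0, 1, 1, 1]
print(np.abs(detrend_chunkwise(samples, chunks, polyord=1)).max() < 1e-10)  # True

# a non-linear confound is removed once it is passed as an extra regressor
motion = np.array([0.0, 1, 0, 0, -1, 0])
noisy = samples + 0.5 * motion[:, None]
print(np.abs(detrend_chunkwise(noisy, chunks, 1, confounds=motion)).max() < 1e-10)  # True
```

Building one design matrix with a separate polynomial block per chunk keeps the chunks independent while still letting a shared confound regressor be estimated from all samples at once.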

Finally, it is possible to use positional information about the dataset samples for the detrending. This is useful, for example, if the samples in the dataset are not evenly spaced over the acquisition time window. In that case, an actual linear trend in the data would be distorted and not properly removed. By setting the space argument to the name of a samples attribute that carries this information, the mapper takes it into account and shifts the polynomials accordingly. If space is given but the dataset does not contain such an attribute, evenly spaced coordinates are generated and stored in the mapped dataset.

Notes

The mapper only supports mapping of datasets, not plain data. Moreover, reverse mapping and subsequent forward-mapping of partial datasets are currently not implemented.
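Why positional information matters for detrending can be illustrated with a small NumPy sketch. It assumes, purely for illustration, that the sample coordinates are mapped linearly onto [-1, 1] before the Legendre polynomials are evaluated; detrend_positions is a hypothetical helper, not part of PyMVPA, and the mapper's own scaling of the space coordinates may differ.

```python
import numpy as np
from numpy.polynomial import legendre


def detrend_positions(samples, coords=None, polyord=1):
    """Hypothetical helper: Legendre detrending that honours sample positions."""
    samples = np.asarray(samples, dtype=float)
    if coords is None:
        # no positional information: assume evenly spaced samples
        coords = np.arange(len(samples), dtype=float)
    coords = np.asarray(coords, dtype=float)
    # assumption: map the coordinate range linearly onto [-1, 1]
    x = 2.0 * (coords - coords.min()) / (coords.max() - coords.min()) - 1.0
    design = legendre.legvander(x, polyord)
    beta, *_ = np.linalg.lstsq(design, samples, rcond=None)
    return samples - design @ beta


# acquisition times with a gap; the signal is a pure linear trend in time
times = np.array([0.0, 1, 2, 10, 11, 12])
signal = 0.5 * times
print(np.abs(detrend_positions(signal, times)).max() < 1e-10)  # True: trend removed
print(np.abs(detrend_positions(signal)).max() > 1.0)           # True: residual remains
```

Treating the unevenly spaced samples as if they were evenly spaced turns a straight line in time into a bent curve over the sample index, which a first-order polynomial cannot remove.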

Examples

>>> import numpy as np
>>> from mvpa2.datasets import dataset_wizard
>>> from mvpa2.mappers.detrend import PolyDetrendMapper
>>> samples = np.array([[1.0, 2, 3, 3, 2, 1],
...                     [-2.0, -4, -6, -6, -4, -2]]).T
>>> chunks = [0, 0, 0, 1, 1, 1]
>>> ds = dataset_wizard(samples, chunks=chunks)
>>> dm = PolyDetrendMapper(chunks_attr='chunks', polyord=1)

>>> # the mapper will be auto-trained upon first use
>>> mds = dm.forward(ds)

>>> # total removal of all (chunk-wise) linear trends
>>> np.sum(np.abs(mds)) < 0.00001
True

Available conditional attributes:

- calling_time+: None
- raw_results: None
- trained_dataset: None
- trained_nsamples+: None
- trained_targets+: None
- training_time+: None

(Conditional attributes enabled by default are suffixed with +.)

Parameters

space : str or None

If not None, a samples attribute of the same name is added to the mapped dataset that stores the coordinates of each sample in the space spanned by the polynomials. If an attribute of that name is already present in the input dataset, its values are interpreted as sample coordinates in the space that should be spanned by the polynomials.

polyord : int, optional

Order of the Legendre polynomial to remove from the data. This removes every polynomial up to and including the given order; for example, 3 removes the 0th, 1st, 2nd, and 3rd order polynomials from the data. N.B.: The 0th polynomial is the baseline shift, the 1st is the linear trend. If you specify a single int and the chunks_attr parameter is not None, this value is used for each chunk. You can also specify a different polyord value for each chunk by providing a list or ndarray of polyord values with length equal to the number of chunks. Constraints: value must be convertible to type ‘int’. [Default: 1]

chunks_attr : None or str, optional

If None, the whole dataset is detrended at once. Otherwise, the given samples attribute (specified by its name) is used to define chunks of the dataset that are processed individually. In that case, all samples within a chunk should be in contiguous order and the chunks should be sorted from low to high, unless the dataset provides information about the coordinate of each sample in the space that should be spanned by the polynomials (see the space argument). Constraints: value must be None, or value must be a string. [Default: None]

opt_regs : None or list(str), optional

List of sample attribute names that should be used as additional regressors. An example use would be to regress out motion parameters. Constraints: value must be None, or value must be convertible to list(str). [Default: None]

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

auto_train : bool

Flag whether the learner will automatically train itself on the input dataset when called untrained.

force_train : bool

Flag whether the learner will enforce training on the input dataset upon every call.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a; see Dataset.get_attr()), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute ‘stats’. In addition to a plain attribute identifier, it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for the attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in __call__() to perform a final processing step on the resulting dataset. If None, nothing is done.

descr : str

Description of the instance
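The polyord semantics described above (all Legendre orders from 0 up to polyord are removed, and a list gives one order per chunk) can be sketched as follows. This is a hypothetical NumPy helper, not PyMVPA code; it assumes that the i-th list entry applies to the i-th chunk in sorted order of the unique chunk values, and it fits each chunk separately, which gives the same result as a joint block-wise fit because no extra regressors are shared across chunks.

```python
import numpy as np
from numpy.polynomial import legendre


def detrend_per_chunk_order(samples, chunks, polyord):
    """Hypothetical helper: polyord is an int or one order per (sorted) chunk."""
    samples = np.asarray(samples, dtype=float)
    chunks = np.asarray(chunks)
    uchunks = np.unique(chunks)  # sorted unique chunk labels
    # a single int is broadcast to every chunk; a list must match the chunk count
    orders = np.broadcast_to(np.asarray(polyord, dtype=int), uchunks.shape)
    out = np.empty_like(samples)
    for chunk, order in zip(uchunks, orders):
        mask = chunks == chunk
        x = np.linspace(-1.0, 1.0, int(mask.sum()))
        # columns P0 (baseline shift), P1 (linear trend), ..., P_order
        design = legendre.legvander(x, int(order))
        beta, *_ = np.linalg.lstsq(design, samples[mask], rcond=None)
        out[mask] = samples[mask] - design @ beta
    return out


t = np.linspace(-1.0, 1.0, 5)
data = np.concatenate([3 + 2 * t, 1 + t ** 2])  # linear trend, then quadratic trend
chunks = [0] * 5 + [1] * 5
print(np.abs(detrend_per_chunk_order(data, chunks, [1, 2])).max() < 1e-10)  # True
print(np.abs(detrend_per_chunk_order(data, chunks, 1)).max() > 0.1)         # True
```

With polyord=1 the quadratic trend in the second chunk survives, while polyord=[1, 2] removes both trends exactly.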

Methods