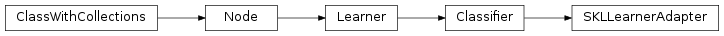

mvpa2.clfs.warehouse.SKLLearnerAdapter

class mvpa2.clfs.warehouse.SKLLearnerAdapter(skl_learner, tags=None, enforce_dim=None, **kwargs)

Generic adapter for instances of learners provided by scikits.learn.

Provides basic adaptation of the interface (e.g. train -> fit) and wraps the constructed instance of a learner from skl so that it looks like any other learner present within PyMVPA, and therefore obtains all the conditional attributes defined at the base level of a Classifier.

Notes

Available conditional attributes:

- calling_time+: None
- estimates+: Internal classifier estimates the most recent predictions are based on
- predicting_time+: Time (in seconds) it took the classifier to predict
- predictions+: Most recent set of predictions
- raw_results: None
- trained_dataset: None
- trained_nsamples+: None
- trained_targets+: None
- training_stats: Confusion matrix of learning performance
- training_time+: None

(Conditional attributes enabled by default are suffixed with +)

Examples

TODO
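The adaptation described above (train -> fit, plus a `probabilities` conditional attribute when `predict_proba` exists) can be sketched without PyMVPA or scikit-learn installed. Everything here is a hypothetical stand-in: `MajorityLearner` and `MiniAdapter` are illustrative names, the `ca` dictionary only imitates conditional attributes, and the real adapter works on PyMVPA datasets rather than plain lists.

```python
class MajorityLearner:
    """Hypothetical skl-style learner: always predicts the most frequent label."""

    def fit(self, X, y):
        counts = {}
        for label in y:
            counts[label] = counts.get(label, 0) + 1
        self.classes_ = sorted(counts)
        self.majority_ = max(counts, key=counts.get)
        total = float(len(y))
        self.priors_ = [counts[c] / total for c in self.classes_]
        return self

    def predict(self, X):
        return [self.majority_ for _ in X]

    def predict_proba(self, X):
        # One row of class priors per sample, ordered like classes_.
        return [list(self.priors_) for _ in X]


class MiniAdapter:
    """Simplified illustration of the adapter idea, not PyMVPA's code."""

    def __init__(self, skl_learner):
        # The wrapped learner must provide fit() and predict().
        for name in ("fit", "predict"):
            if not callable(getattr(skl_learner, name, None)):
                raise TypeError("learner must implement %s()" % name)
        self._learner = skl_learner
        self.ca = {}  # stand-in for conditional attributes

    def train(self, samples, targets):
        # PyMVPA-style train() delegates to the skl-style fit().
        self._learner.fit(samples, targets)
        self.ca["trained_nsamples"] = len(samples)

    def predict(self, samples):
        predictions = self._learner.predict(samples)
        self.ca["predictions"] = predictions
        # Probabilities are only recorded if the learner offers them.
        if hasattr(self._learner, "predict_proba"):
            self.ca["probabilities"] = self._learner.predict_proba(samples)
        return predictions


adapter = MiniAdapter(MajorityLearner())
adapter.train([[0.0], [1.0], [2.0]], ["a", "b", "b"])
predicted = adapter.predict([[3.0], [4.0]])
```

A learner without `predict_proba` would pass through the same wrapper unchanged, and `ca` would simply lack the `probabilities` entry.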


Parameters:

skl_learner :

Existing instance of a learner from skl. It should implement `fit` and `predict`. If `predict_proba` is available in the interface, then the conditional attribute `probabilities` becomes available as well.

tags : list of string

What additional tags to attach to this learner. Tags are used in the queries to classifier or regression warehouses.

enforce_dim : None or int, optional

If not None, enforces the given dimensionality for the `predict` call, provided all other trailing dimensions are degenerate.

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

auto_train : bool

Flag whether the learner will automatically train itself on the input dataset when called untrained.

force_train : bool

Flag whether the learner will enforce training on the input dataset upon every call.

space : str, optional

Name of the ‘processing space’. The actual meaning of this argument heavily depends on the sub-class implementation. In general, this is a trigger that tells the node to compute and store information about the input data that is “interesting” in the context of the corresponding processing in the output dataset.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a – see `Dataset.get_attr()`), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute stats.

In addition to a plain attribute identifier it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for an attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in `__call__()` to perform a final processing step on the dataset that is about to be returned as the result. If None, nothing is done.

descr : str

Description of the instance
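The tuple form of `pass_attr` described above can be made concrete with a small sketch. `normalize_pass_attr` is a hypothetical helper, not part of PyMVPA: it only shows how a plain identifier or a shortened tuple maps onto the four documented positions. Using `None` for omitted elements, and for an identifier without a collection prefix, is an assumption made for illustration.

```python
def normalize_pass_attr(spec):
    """Expand one pass_attr entry into its four documented fields.

    Hypothetical illustration only; None marks an element that was left out.
    """
    if isinstance(spec, str):
        spec = (spec,)
    spec = tuple(spec)
    if not 1 <= len(spec) <= 4:
        raise ValueError("expected 1 to 4 elements, got %d" % len(spec))
    # Trailing elements may be omitted, so pad from the end.
    identifier, target, swap_axis, new_name = spec + (None,) * (4 - len(spec))
    # The collection prefix (sa, fa, a or ca) precedes the first dot.
    prefix, dot, name = identifier.partition(".")
    if not dot:
        prefix, name = None, identifier
    return {
        "source": prefix,        # collection the attribute is read from
        "name": name,            # attribute name inside that collection
        "target": target,        # collection to store it in (sa, fa or a)
        "swap_axis": swap_axis,  # axis to swap with the current first axis
        "new_name": new_name,    # name to use in the output dataset
    }


full = normalize_pass_attr(("ca.null_prob", "fa", 1, "pvalues"))
short = normalize_pass_attr("fa.stats")
```

Here `full` corresponds to the documented example, while `short` shows the simplified form in which every trailing element is left out.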

Methods